Last Updated: April 21, 2026

If you’ve been using AI tools for a while, you already know the frustration: you find a workflow that clicks, you rely on it, and then one Tuesday morning something changes. A limit tightens. A plan gets restructured. A model you counted on gets retired.

This guide exists to cut through that noise.

What you’ll find here is a current, practical breakdown of usage limits, pricing, models, and features across ChatGPT, Claude, Gemini, Grok, and Perplexity — as of April 2026. It’s not a “best AI” ranking. There’s no winner. Different platforms are better for different things, and your workflow probably looks nothing like the next person’s.

The goal is simple: enough accurate information to pick the right tool (or combination of tools) for what you actually do.

What’s Changed Since Early April 2026

Early April was relatively quiet. Mid-April was not.

Claude Opus 4.7 launched April 16. It’s Anthropic’s most capable generally available model to date — not a minor version bump. Agentic coding improved significantly (SWE-bench Verified jumped from 80.8% to 87.6%), vision now handles images up to 2,576px resolution, and a new “task budget” feature lets agents track a token target and finish cleanly rather than running out mid-task. On the consumer side, Opus 4.7 is now the default in Claude’s model picker. Pricing is unchanged at $5/$25 per million tokens — but a new tokenizer can raise effective token counts by up to ~35% on some workloads, so don’t assume cost parity with Opus 4.6 without testing. Alongside the model, Anthropic launched Claude Design — an Anthropic Labs research preview for creating visual outputs like prototypes, slides, and one-pagers, available to Pro, Max, Team, and Enterprise subscribers.

OpenAI added a $100/month Pro tier on April 9. There’s now a plan between Plus ($20) and Pro ($200). The new tier gives 5x more Codex usage than Plus, with access to the full Pro model suite — same as the $200 plan, just with lower usage headroom. It’s a direct mirror of Anthropic’s Claude Max structure. Through May 31, new subscribers to the $100 plan get a promotional 10x Codex boost. After that date it reverts to 5x.

Anthropic blocked third-party agent access on April 4. Claude Pro and Max subscriptions no longer power external agent frameworks. Tools that were running continuously through your subscription — like OpenClaw — now require pay-as-you-go API bundles or a direct API key. Affected subscribers received a one-time credit equal to their monthly plan cost. If your workflow runs Claude through a third-party tool rather than directly, check whether it’s affected.

Grok 4.3 Beta dropped April 17 for SuperGrok Heavy subscribers. No announcement, no press release — it just appeared in the model selector. New capabilities: native video input, direct generation of PDFs, spreadsheets, and PowerPoint files from conversation, and tighter Grok Computer integration. Architecture retains the 2M context window and 16-agent Heavy system from 4.20. Full rollout expected mid-to-late May 2026.

GPT-5.2 Thinking sunsets June 5, 2026 — about six weeks away. If any workflow still touches it, migrate to GPT-5.4 now.

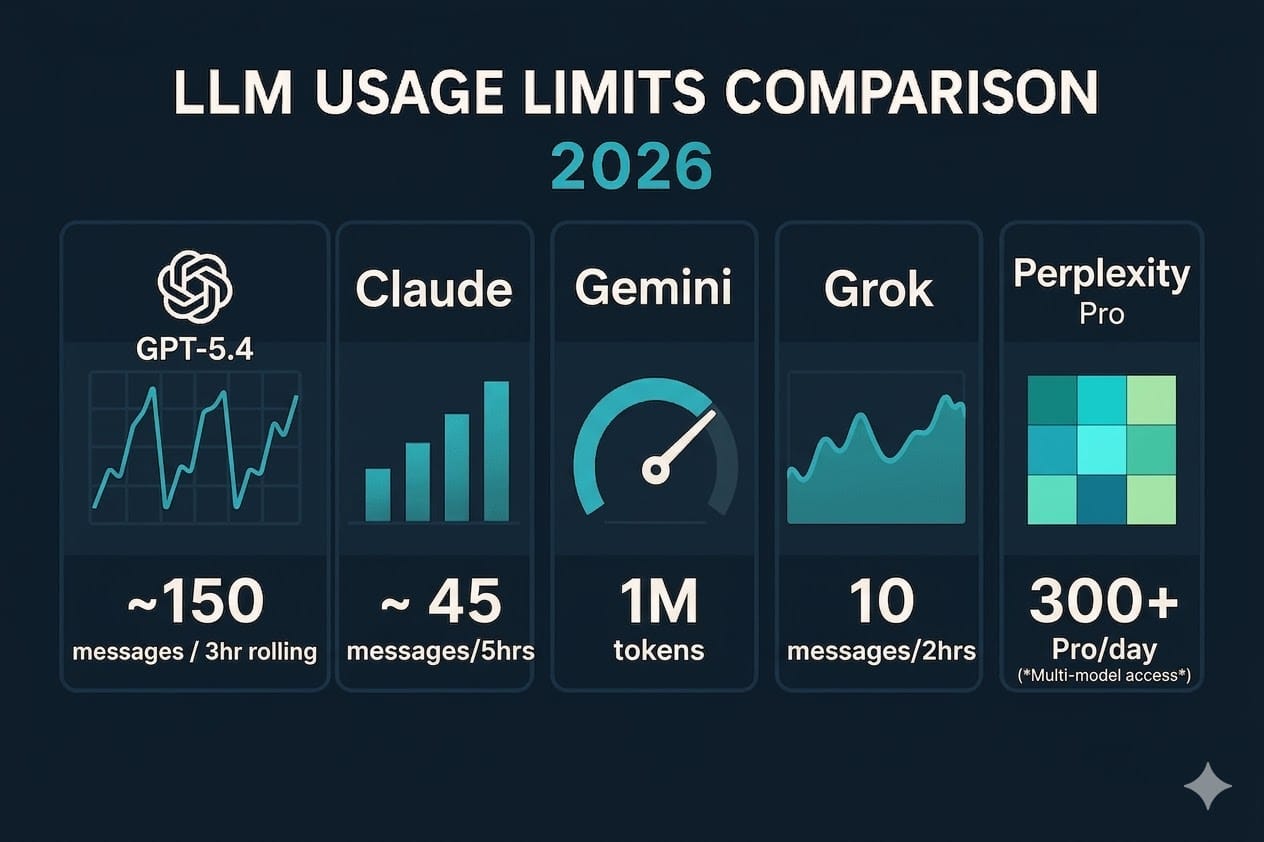

Quick Comparison: All Five Platforms at a Glance


| Feature | ChatGPT (OpenAI) | Claude (Anthropic) | Gemini (Google) | Grok (xAI) | Perplexity |
|---|---|---|---|---|---|
| 🆓 FREE TIER | | | | | |
| Free Model | GPT-5.4 (limited) | Claude Sonnet 4.6 (limited) | Gemini 2.5 Flash | Grok 4.20 (limited) | Sonar (basic search) |
| Free Message Limit | Limited daily; degrades to lighter model at cap | Limited daily; varies with demand | Limited daily; 2.5 Pro restricted | ~10 requests / 2 hrs (estimated) | 5 Pro Searches/day; unlimited standard |
| Ads on Free Tier | ✗ Yes (US, AU, NZ, CA) | ✓ No ads | ✓ No ads | ✓ No ads | ✓ No ads |
| 💳 INDIVIDUAL PAID (~$20/mo) | | | | | |
| Plan Name | Plus | Pro | Google AI Pro | SuperGrok | Pro |
| Monthly Price | $20/mo | $20/mo ($17 annual) | $19.99/mo (1st month free) | $30/mo | $20/mo ($200/yr) |
| Top Model Access | GPT-5.4 Thinking | Claude Opus 4.7 (new) | Gemini 3.1 Pro | Grok 4.20 + Grok 4.1 | GPT-5.4, Opus 4.7, Gemini 3.1 Pro (new) |
| Context Window | 1M tokens | 1M tokens | 1M tokens | 2M tokens (4.1 Fast) | 200K tokens (Sonar) |
| Deep Research | ✓ 10 runs/mo | ✓ Included | ✓ Expanded access | ✓ DeepSearch mode | ✓ Core strength |
| Image Generation | ✓ DALL-E / GPT Image | ✗ None native | ✓ Imagen / Flow | ✓ Aurora / Imagine | ✓ DALL-E + SDXL |
| Voice Mode | ✓ Advanced Voice | ✓ Included | ✓ Gemini Live | ✓ Voice Mode | ✗ Not available |
| Memory | ✓ All tiers | ✓ All tiers (incl. free) | ✓ Included | ✗ No persistent memory | ✓ Thread history |
| Real-Time Web Search | ✓ | ✓ | ✓ + Google Search grounding | ✓ + live X/web data | ✓ Core product feature |
| 🚀 PREMIUM / POWER TIER ($100–$300/mo) | | | | | |
| Plan Name | Pro $100 / Pro $200 (new) | Max | Google AI Ultra | SuperGrok Heavy | Max |
| Monthly Price | $100/mo (5x, new) or $200/mo (20x) | $100/mo (5x) or $200/mo (20x) | ~$42/mo ($124.99/3 months) | $300/mo | $200/mo |
| Key Extra Value | GPT-5.4 Pro model, 5x or 20x Codex, max Deep Research, expanded Sora | 5x or 20x usage vs Pro, persistent memory, Claude Code Auto mode, Claude Design, early access | 25K AI credits/mo, Veo 3.1, Gemini 3 Pro, Jules coding agent, highest AI Studio limits | Grok 4.3 Beta (early access), 16-agent Heavy system, highest rate limits, Big Brain Mode | Perplexity Computer (19 AI models), Comet with Opus 4.7, unlimited Labs, no usage caps |
| 🏢 TEAMS / BUSINESS | | | | | |
| Team Plan Price | $20/seat/mo annual; $30/seat monthly | $25/seat/mo annual; $30/seat monthly | $20–$30/seat/mo (Workspace add-on) | $30/seat/mo | $40/seat/mo annual (Enterprise Pro) |
| Data Not Used to Train | ✓ Business+ | ✓ Team+ | ✓ Workspace | ✓ Business+ | ✓ Enterprise Pro+ |
| Coding / Agent Tools | Codex (limits) + PAYG Codex seats | Claude Code, Cowork, Dispatch | Jules (async coding agent) | Big Brain Mode, DeepSearch | Perplexity Computer (Max) |
| 🔍 WHAT MAKES EACH DISTINCT | | | | | |
| Standout Edge | Most features per dollar at $20; now mirrors Claude’s premium tier structure; GPT-5.4 fuses reasoning + coding | Best agentic coding with Opus 4.7; leads on long-context work; Claude Design for visual output | Deepest Google Workspace integration; best value if you live in GSuite | Real-time X data; 2M context window; most permissive content policy | Best for research; cites every source; access to models from all major labs |
| Biggest Limitation | Ads on free/Go tiers; opaque usage caps; pricing actively evolving | No native image generation; third-party agent access now requires API key | API tiers more complex; Ultra’s quarterly billing is awkward | No persistent memory at any tier; no published cap numbers; $30/mo premium vs $20 competitors | Not a creative/writing tool; shorter context window; no voice mode |

Usage limits on free and paid tiers aren’t always publicly disclosed and vary by demand, region, and account history. Data verified April 21, 2026.

April 2026 pricing note: OpenAI and Anthropic now share an identical premium tier structure — $100/month for 5x usage, $200/month for 20x. The differentiator isn’t price anymore. It’s what each platform does with those tokens. Claude leads on agentic coding with Opus 4.7. ChatGPT leads on breadth of features at the same price.


ChatGPT (OpenAI)

OpenAI’s ChatGPT now runs on seven subscription tiers, and the range from free to Pro has never been wider. The free plan gives you GPT-5.4 with a catch: it’s limited, it degrades to a lighter model when you hit the cap, and — as of February 2026 — it comes with ads. Not just in the US anymore either. The ad rollout has expanded to Australia, New Zealand, and Canada. If you’re on the fence about upgrading, that last point might push you.

The Plans

Free ($0) gets you GPT-5.4 access, basic file and image uploads, web browsing, and the ability to use (not create) custom GPTs. An ad-free experience is available, but you trade away some of your usage allowance to get it. Your conversations may be used to train OpenAI’s models by default; you can opt out in Settings → Data Controls.

Go ($8/month) is the budget tier, launched globally in early 2026. More message volume than free, same ad situation. What it doesn’t include: advanced reasoning models, Sora, Codex, Agent Mode, or Deep Research. If you use ChatGPT for professional work, skip Go and go straight to Plus. The $12 difference buys you a completely different product.

Plus ($20/month) is where ChatGPT becomes a serious work tool. GPT-5.4 Thinking, 1M token context, Deep Research (10 runs/month), Sora video, Codex, Agent Mode. The price hasn’t moved in three years while the product has expanded substantially. For most individuals doing real work, this is the right tier.

Pro $100 ($100/month) is new as of April 9, 2026: a middle tier between Plus and the original Pro. You get the same model suite as Pro $200, including GPT-5.4 Pro and unlimited Instant and Thinking access, but with 5x more usage than Plus rather than 20x. The practical difference is ceiling, not capability. If you’ve been bumping into Plus limits but are nowhere near what the $200 plan is built for, this is the tier that was missing until now. Note: through May 31, 2026, new subscribers get a promotional 10x Codex boost. After that it reverts to the standard 5x.

Pro $200 ($200/month) remains the top individual tier. Everything in Pro $100, plus 20x usage vs Plus. For genuinely heavy users running long multi-step reasoning or agentic coding sessions daily, the extra headroom matters. Most people won’t need it — but if you’re consistently maxing out the $100 plan, you’ll know.
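
To make the ceiling-versus-price tradeoff concrete, here is a rough sketch of what each tier costs per “Plus-equivalent” of usage. It takes the advertised multipliers (5x and 20x relative to Plus) at face value, which is an assumption: OpenAI doesn’t publish exact caps, so treat the result as a ratio, not a measurement.

```python
# Illustrative arithmetic only. The multipliers are the advertised
# "Nx usage vs Plus" figures quoted in this guide, not measured limits.
PLANS = {
    "Plus": (20, 1),       # (monthly price in USD, usage multiple vs Plus)
    "Pro $100": (100, 5),
    "Pro $200": (200, 20),
}


def cost_per_plus_unit(price_usd: float, multiplier: float) -> float:
    """Monthly price divided by the advertised usage multiple relative to Plus."""
    return price_usd / multiplier


for name, (price, multiplier) in PLANS.items():
    unit_cost = cost_per_plus_unit(price, multiplier)
    print(f"{name}: ${unit_cost:.2f} per Plus-equivalent of usage")
```

On these assumed numbers, the $100 tier costs the same per unit of usage as Plus, and only the $200 tier is cheaper per unit. In other words, the $100 plan buys headroom and the Pro model suite, not a volume discount.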

Business ($20/seat/month, annual) — updated in April 2026, down from $25. Monthly billing runs $30/seat. Includes SAML SSO, an admin console, and conversations not used for training by default. Pay-as-you-go Codex-only seats are also available for Business and Enterprise teams, billed on token consumption with no fixed seat fee. Small teams can pilot AI coding workflows without rolling everyone onto full Business seats.

Enterprise (custom pricing) adds SCIM, SLAs, compliance certifications, and custom data retention. API access is billed separately regardless of which plan you’re on.

Models Right Now

GPT-5.4 launched March 5, 2026 and is a real departure from what came before. It merges the GPT series with the Codex coding models into a single architecture, so you’re not switching between a “reasoning mode” and a “coding mode.” Computer use is native. GPT-5.4 Thinking is available on Plus, Business, and Pro. GPT-5.4 Pro is reserved for Pro and Enterprise.

One important deadline: GPT-5.2 Thinking sunsets June 5, 2026. If your workflows depend on it, start testing GPT-5.4 now. You have about six weeks.
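
If you track several deprecation dates, a few lines of date arithmetic keep the “how long do I have” question honest. This is a minimal sketch; the `as_of` date is this guide’s verification date, and `days_until` is a hypothetical helper name.

```python
from datetime import date


def days_until(deadline: date, today: date) -> int:
    """Whole days remaining between today and the deadline."""
    return (deadline - today).days


as_of = date(2026, 4, 21)   # date this guide was last verified
sunset = date(2026, 6, 5)   # GPT-5.2 Thinking sunset date

remaining = days_until(sunset, as_of)
print(f"{remaining} days left (~{remaining / 7:.1f} weeks)")
```

Swap `as_of` for `date.today()` to get a live countdown.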

On Usage Limits

OpenAI is notably vague about specific message caps. It says only that limits “may change based on demand and system performance,” which is technically accurate but unhelpful. What’s consistently observed: Plus users have generous limits for most workflows, but complex multi-step reasoning or large-context tasks can eat through them faster. If you’re regularly hitting walls, the new $100 Pro tier is likely the answer before you commit to $200.

Claude (Anthropic)

Claude is the choice for people who care deeply about coding quality and long-document work. The Opus 4.7 release on April 16 extended that lead — it’s the strongest agentic coding model on the market right now by published benchmarks, and it brought a new visual output tool with it. A few things also changed on the access side this month that affect power users directly.

The Plans

Free ($0) provides access to Claude Sonnet 4.6 with daily limits that vary by server demand. Web, iOS, Android, and desktop — no credit card required. Memory from chat history is available on free (Anthropic rolled this to all tiers). Limits are real, though. If you’re doing substantial work, you’ll feel them.

Pro ($20/month, $17/month annual) opens up the full tool suite: Claude Code in the terminal, file creation and code execution, unlimited projects, Google Workspace integration, remote MCP connectors, and access to extended reasoning models including Opus 4.7. About 5x more usage than free, though even Pro has limits — Anthropic doesn’t publish exact numbers. Claude Design, Anthropic’s new visual output tool (Anthropic Labs research preview), is also available on Pro and rolling out gradually.

Max ($100/month or $200/month) is built for people who routinely bump into Pro’s caps. There are two tiers within Max: 5x more usage at $100, or 20x more at $200. Max also adds persistent memory across conversations, early access to new features, priority access during peak times, and Claude Code Auto mode, in which the agent acts independently without asking for constant confirmation (available on Max as of April 2026). If you’re spending hours per day in Claude on intensive tasks, the $100 tier is worth running the math on.

One important change for power users: As of April 4, 2026, Claude Pro and Max subscriptions no longer power third-party agent frameworks. If you were running automated Claude workflows through a tool like OpenClaw, those workflows now require pay-as-you-go API access or a direct API key. Affected subscribers received a one-time credit equal to their monthly plan cost. It’s a real change for anyone who had built automation on top of their subscription — worth checking if your setup is affected before your next billing cycle.

Team ($25/seat/month annual, $30 monthly) adds collaboration features, shared projects, and workspace admin controls. Premium Team seats at $150/month add the full Claude Code developer environment for individual team members who need it. Minimum 2 users.

Enterprise (custom) adds SSO, audit logging, enhanced context, compliance APIs, and institution-wide controls. Self-serve Enterprise plans are now available directly on the website without a sales conversation — a recent change that makes it accessible to smaller organizations.

Models Right Now

Three tiers, each with a clear job:

Haiku 4.5 is the fastest and cheapest — built for high-volume, latency-sensitive tasks where frontier reasoning isn’t required. API pricing: $1 input / $5 output per million tokens.

Sonnet 4.6 is the workhorse, released February 17, 2026. Anthropic’s own data shows developers using Claude Code preferred it over the previous flagship, Opus 4.5, 59% of the time. Strong on coding, computer use, and long-context reasoning at $3 / $15 per million tokens.

Opus 4.7 is the flagship, released April 16, 2026. Stronger agentic coding with SWE-bench Verified jumping from 80.8% to 87.6%, better long-horizon reasoning, and significantly improved vision with support for images up to 2,576px resolution. A new “task budget” feature lets you set a token target for a long-running agentic loop; the model tracks its own progress and wraps up gracefully rather than running dry mid-task. Available across Claude.ai, API, Amazon Bedrock, Google Vertex AI, Microsoft Foundry, and GitHub Copilot. Pricing unchanged at $5/$25 per million tokens — but a new tokenizer means the same text can use up to ~35% more tokens than on Opus 4.6. Test your actual workload before assuming cost parity.
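
A quick way to see what the tokenizer change could mean for a bill is to price one request at both ends of the range. The sketch below uses the $5/$25 per-million rates quoted above; the request size (200K input, 20K output tokens) is a made-up example, and applying the same inflation factor to input and output is a simplifying assumption.

```python
# Per-million-token rates quoted in this guide for Opus 4.7.
OPUS_INPUT_USD_PER_M = 5.00
OPUS_OUTPUT_USD_PER_M = 25.00


def request_cost(input_tokens: int, output_tokens: int,
                 token_inflation: float = 0.0) -> float:
    """Cost in USD of one request.

    token_inflation is the fractional increase in token count for the
    same text (0.35 means 35% more tokens). Assumption: it applies
    equally to input and output.
    """
    scale = 1.0 + token_inflation
    input_cost = input_tokens * scale * OPUS_INPUT_USD_PER_M
    output_cost = output_tokens * scale * OPUS_OUTPUT_USD_PER_M
    return (input_cost + output_cost) / 1_000_000


# Hypothetical workload: a 200K-token input with a 20K-token response.
baseline = request_cost(200_000, 20_000)
worst_case = request_cost(200_000, 20_000, token_inflation=0.35)
print(f"baseline: ${baseline:.3f}  worst case: ${worst_case:.3f}")
```

For this example the per-request cost moves from about $1.50 to about $2.03 at the top of the range. Your real inflation factor depends on the content, so measure token counts on a sample of your own prompts.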

Opus 4.6 remains available via the API for existing integrations but has been replaced in the consumer model picker.

What’s Worth Knowing

Claude doesn’t generate images. That’s still true in 2026. It’s not coming. If image generation is part of your workflow, you’re pairing Claude with something else — or using Claude Design for the visual work it does handle (prototypes, slides, one-pagers).

The jump from Pro ($20) to Max ($100) is steep, with nothing in between for consumer plans. That’s a common frustration, and it remains true. ChatGPT’s new $100 Pro tier mirrors the same jump from $20, so neither platform currently offers a step in between.

Claude Code — the terminal-based agentic coding tool — is integrated with VS Code, JetBrains, and the desktop app, and it’s a legitimate reason many developers prefer Claude for coding work. It’s available on Pro and up. The new Auto mode on Max reduces the friction of using it for longer tasks significantly.

Gemini (Google)

Google’s AI subscription lineup went through a naming overhaul earlier this year. What was “Gemini Advanced” is now “Google AI Pro.” What was “Google One AI Premium” is now part of the Google AI plan family. The models underneath improved too — Gemini 3.1 Pro is the current flagship, with Gemini 3 Pro Preview deprecated on March 9, 2026.

If you’re deep in Google’s ecosystem — Gmail, Docs, Sheets, Drive, Meet — Gemini is worth a harder look than most AI comparisons give it credit for.

The Plans

Free gives you Gemini 2.5 Flash, limited access to 2.5 Pro, Deep Research (restricted), Gemini Live voice, Canvas, Gems, and 100 monthly AI credits for video generation in Flow and Whisk. NotebookLM is included. It’s a genuinely useful free tier for casual exploration.

Google AI Pro ($19.99/month, first month free) is the full package for most users. Access to Gemini 3.1 Pro, expanded Deep Research, 1M token context window, 1,000 monthly AI credits, video generation via Veo 3.1, and Gemini integrated directly into Gmail, Docs, Sheets, and other Workspace apps. Higher limits in Gemini Code Assist and the Jules async coding agent. NotebookLM upgrades to 5x more audio overviews. As of April 20, 2026, AI Pro subscribers also get higher usage limits and access to Gemini Pro models in AI Studio — useful if you want to vibe-code before committing to API billing. The free first-month trial is a low-risk way to evaluate the Workspace integration before committing.

Google AI Ultra (~$42/month, billed $124.99 per 3 months) adds access to Veo 3.1 for high-quality video, 25,000 monthly AI credits, the Gemini 3 Pro model for US subscribers, and the highest limits across every feature including Jules and Gemini Code Assist. Ultra subscribers also got expanded AI Studio access on April 20, alongside Pro. The quarterly billing structure is annoying, since you’re paying $125 at a time rather than monthly, but the effective rate of around $42/month places it well above Google AI Pro ($19.99) and well below ChatGPT Pro ($100 or $200).
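
Plans billed quarterly or annually are easier to compare once you normalize them to a monthly figure. A minimal sketch, using the Ultra quarterly price above and the Perplexity Pro annual price from the comparison table; `effective_monthly` is a hypothetical helper name.

```python
def effective_monthly(total_usd: float, months: int) -> float:
    """Spread a multi-month charge evenly across its billing period."""
    return total_usd / months


ultra = effective_monthly(124.99, 3)          # Google AI Ultra, billed quarterly
perplexity_pro = effective_monthly(200, 12)   # Perplexity Pro, billed annually

print(f"Google AI Ultra: ${ultra:.2f}/mo")
print(f"Perplexity Pro (annual): ${perplexity_pro:.2f}/mo")
```

The monthly figure is useful for comparison, but the cash leaves your account in the full billing-period amount, which matters if you plan to cancel partway through.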

Workspace add-ons (Business/Enterprise) are separate from consumer Google AI plans and require an active Google Workspace subscription. Pricing varies by tier.

Models Right Now

Gemini 3.1 Pro is the current consumer and API flagship. The previous Gemini 3 Pro Preview was deprecated March 9, 2026. If you’re using any app or tool that relies on that model string, check for migration notices.

Gemini 2.5 Flash is the free-tier workhorse and a strong option for developers — fast, affordable, and capable enough for most real-world tasks.

Gemini 3.1 Flash-Lite launched in March 2026 for developers: $0.25 per million input tokens, 45% faster than 2.5 Flash. It’s among the cheapest production-ready APIs from any major provider right now; of the models covered here, only Grok 4.1 Fast lists a lower input price ($0.20).

What’s Worth Knowing

Gemini’s real advantage isn’t the model — it’s the integration. The ability to ask your Gmail inbox for an AI Overview, work with Gemini directly inside Docs and Sheets, and push Deep Research outputs straight to Drive is something no other platform matches. If you spend your day in Google’s tools, that integration has compounding value.

Jules — the async coding agent — is still in beta and English-only. Capacity isn’t guaranteed. Worth trying, but don’t build workflows around it yet.

One note for developers building on the Gemini API: Google significantly tightened the free developer tier on April 1, 2026. Pro models including Gemini 3.1 Pro were removed from API free access and are now paid-only. Mandatory monthly spend caps were introduced by billing tier — Tier 1 is capped at $250/month, Tier 2 at $2,000/month. These are API-level changes that don’t affect consumer Gemini app users directly. Also worth noting: Gemini 2.0 Flash and 2.0 Flash-Lite deprecate June 1, 2026 — about six weeks out. If you’re building on either, migration to 2.5 Flash or a 3.x Flash model is worth doing now rather than under deadline pressure.
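
To get a feel for what the spend caps allow, you can convert each cap into a token budget. The sketch below uses the Flash-Lite input price quoted earlier ($0.25 per million) and counts input tokens only, because this guide doesn’t list output pricing. That makes the result an upper bound, not a forecast.

```python
# Monthly spend caps by billing tier, as described above.
SPEND_CAPS_USD = {"Tier 1": 250, "Tier 2": 2000}

# Gemini 3.1 Flash-Lite input price quoted in this guide (USD per 1M tokens).
FLASH_LITE_INPUT_USD_PER_M = 0.25


def max_input_tokens_millions(cap_usd: float, price_per_m: float) -> float:
    """Upper bound on input tokens (in millions) that fit under a spend cap.

    Ignores output tokens, which are billed separately, so real
    headroom is lower.
    """
    return cap_usd / price_per_m


for tier, cap in SPEND_CAPS_USD.items():
    budget = max_input_tokens_millions(cap, FLASH_LITE_INPUT_USD_PER_M)
    print(f"{tier}: up to {budget:,.0f}M input tokens/month")
```

If your projected volume comes anywhere near these bounds, check the cap for your tier before a production launch, not after requests start failing.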

Grok (xAI)

Grok is the most transparent about what it’s trying to be and the least transparent about how it measures up. xAI publishes almost no specific cap numbers for consumer plans. What it does publish: models that benchmark competitively, a real-time data advantage through X integration, and the largest context window of any platform on this list.

The Plans

Free provides limited Grok access — widely estimated at around 10 requests per two hours, though xAI hasn’t officially confirmed this. Available via grok.com, iOS, Android, and embedded in X. Regional availability varies.

SuperGrok Lite (~$10/month) is a newer entry tier, available in select regions. It unlocks image and video generation through Aurora and Imagine, with moderate message limits. Confirm pricing and availability at checkout — App Store pricing is what xAI treats as the source of truth.

SuperGrok ($30/month) is the main paid tier. Full access to Grok 4.20 and Grok 4.1, DeepSearch for extended research, Big Brain Mode for longer reasoning chains, priority routing, expanded image and video generation, and longer voice mode sessions. It’s $10 more per month than ChatGPT Plus and Claude Pro. That premium is harder to justify unless the real-time X data or the 2M context window is specifically what you need.

X Premium ($8/month) and X Premium+ ($40/month) bundle Grok access with X platform features — blue checkmark, ad revenue sharing, ad-free browsing. If you’re paying for X Premium anyway, Grok access is included. The level of Grok access scales with your X plan tier.

Grok Business ($30/seat/month) adds increased rate limits, no training on your data, team management, Google Drive integration, and audit and security controls.

SuperGrok Heavy ($300/month) is xAI’s top tier, targeting enterprise and research workloads. It provides access to Grok 4.3 Beta (currently in early access), the 16-agent Heavy system, the highest rate limits, and multi-agent capabilities for intensive parallel workflows.

Models Right Now

Grok 4.3 Beta appeared in the model selector on April 17, 2026, without announcement, for SuperGrok Heavy subscribers. New in this release: native video input — the model can now reason about video content conversationally, not just images. It can also generate downloadable PDFs, fully populated spreadsheets, and PowerPoint files directly from conversation. Architecture retains the 16-agent Heavy system and 2M token context window from 4.20. Full rollout to all Heavy subscribers is expected mid-to-late May 2026. If you’re not on SuperGrok Heavy, you can see 4.3 in the model dropdown — you just can’t use it yet.

Grok 4.20 (also labeled Grok 4.2) is the current model for regular SuperGrok subscribers. It’s architecturally different from previous Grok releases: a 4-agent parallel system where specialized sub-agents work simultaneously and cross-verify outputs.

Grok 5 is officially targeting Q2 2026. xAI is training it on their Colossus infrastructure. No new timeline updates this month.

Grok 4.1 Fast is worth knowing for developers: $0.20 per million input tokens, 2M token context window, and benchmark scores competitive with models many times more expensive. Strong option for high-volume applications where cost matters.
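
For high-volume work, the input prices quoted across this guide are worth seeing side by side. The sketch below prices a single hypothetical 500K-token input on each model. It covers input cost only and doesn’t check whether a given model’s context window can hold that much in one request.

```python
# Input prices (USD per 1M tokens) as quoted in this guide.
INPUT_USD_PER_M = {
    "Grok 4.1 Fast": 0.20,
    "Gemini 3.1 Flash-Lite": 0.25,
    "Claude Haiku 4.5": 1.00,
    "Claude Sonnet 4.6": 3.00,
    "Claude Opus 4.7": 5.00,
}


def input_cost(tokens: int, usd_per_million: float) -> float:
    """Input-side cost in USD for a given token count."""
    return tokens * usd_per_million / 1_000_000


DOC_TOKENS = 500_000  # hypothetical document size

# Print cheapest first.
for model, price in sorted(INPUT_USD_PER_M.items(), key=lambda kv: kv[1]):
    print(f"{model}: ${input_cost(DOC_TOKENS, price):.3f}")
```

The spread is roughly 25x between the cheapest and most expensive entries, which is why routing bulk, low-difficulty work to a cheaper model can matter more than any subscription decision.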

What’s Worth Knowing

Grok’s access to real-time X data is genuinely useful for anything trend-adjacent: breaking news, social sentiment, emerging topics. No other platform on this list has that directly.

The 2M token context window on Grok 4.1 Fast is the largest on this list. If you’re working with very long documents and cost is a concern, that’s a real edge.

The lack of published cap numbers is a consistent friction point for users trying to plan workflows. xAI knows this. It hasn’t changed.

One gap that’s harder to overlook at these price points: Grok still has no persistent memory between sessions at any tier — including SuperGrok Heavy at $300/month. ChatGPT and Claude have both had this for well over a year. If you’re evaluating Heavy for high-stakes research or ongoing projects, that’s a real consideration. Storage fees for files and collections on the xAI platform also took effect April 20, 2026 — small cost for most, but worth knowing if you’re storing large knowledge bases via the API.

Perplexity

Perplexity sits in a slightly different category from the others. It’s not trying to be a general-purpose AI assistant. It’s an AI-powered research and search tool that happens to give you access to models from every major lab. That focus is its biggest strength and its most important limitation.

The Plans

Free includes unlimited standard search with citations, 5 Pro Searches per day, basic file uploads, and automatic model selection. Standard search covers 80% of what most people actually use Perplexity for — factual questions, quick lookups, cited overviews. The 5 Pro Search limit is the real constraint.

Pro ($20/month, $200/year) removes the Pro Search cap, adds access to GPT-5.4, Claude Opus 4.7, and Gemini 3.1 Pro within the Perplexity interface, file and document uploads, image generation via DALL-E and SDXL, and API access. The model roster is the key feature: you can run the same query through multiple models and compare outputs without paying for a separate $20 subscription to each lab.

Max ($200/month) is built for intensive research. Unlimited Labs access, early access to new features, the full suite of advanced models, no usage caps on the web interface, and Perplexity Computer — an agentic tool that pulls from 19 different AI models to handle multi-step research workflows. The Comet Browser Agent now runs on Claude Opus 4.7 by default (updated April 2026), with Sonnet 4.5 as an alternative option. The Opus 4.7 upgrade meaningfully improves Comet’s reasoning on complex tasks — analyzing dashboards, digging through codebases, walking through competitor onboarding flows. If you’re doing serious research work daily, this tier deserves a look.

Enterprise Pro ($40/seat/month annual) and Enterprise Max ($325/seat/month annual) add SSO, admin controls, shared Spaces, data retention configurability, and audit logs. Enterprise admins now also have granular feature access controls — including model availability settings and API access toggles — added in April 2026. Enterprise Max is for heavy-workload teams or organizations with compliance requirements.

What’s Worth Knowing

Perplexity’s citation model sets it apart. Every response links to sources, making it possible to verify claims quickly — something that matters in research, journalism, and analysis. Other platforms cite sources occasionally. Perplexity does it by design, and it builds a habit of checking rather than just accepting.

The multi-model access in Pro is underrated. You’re not locked into one lab’s model. Need Opus 4.7’s reasoning depth for a complex analysis? Use it. Need GPT-5.4’s coding ability for a different task? Switch. All from one interface, one subscription. For users who were paying $20/month each for Claude and ChatGPT to access different models, the consolidation argument is real.

The context window is a genuine limitation — 200K tokens on Sonar, compared to 1M+ on the other platforms. For very long document analysis — full codebases, book-length research, large data exports — Perplexity isn’t the right tool. Pair it with Claude or Gemini for that work.

No voice mode. No native image generation (though Pro includes DALL-E / SDXL access). Not built for creative work. These aren’t gaps Perplexity is trying to fill — they’re deliberate choices about what the product is. That focus is what makes it good at research.

Perplexity Computer, the agentic tool that launched in February 2026 for Max subscribers, is now fully rolling out. The concept — 19 AI models working in concert to complete multi-step research tasks, now with Opus 4.7 as the default reasoning engine — points toward where the product is heading. If that matures well, the $200 Max tier looks more defensible a year from now than it does today.

Understanding New Usage Paradigms

Something shifted in 2025 and continued into 2026: platforms stopped competing primarily on “how many messages do you get” and started competing on what happens when you use those messages.

A few things worth understanding as you work through these plans:

Context windows changed the math. A year ago, 200K tokens was impressive. Now four of the five platforms offer 1M tokens or more at their paid tiers (Grok pushes 2M); Perplexity’s Sonar is the exception at 200K. What this means practically: the limiting factor for most workflows isn’t context anymore. It’s reasoning quality at scale and what the model does with the tokens it has. A 1M context window is only useful if the model can actually maintain coherence and recall at depth. Claude Opus 4.7 and GPT-5.4 have made real progress here. Don’t assume a large context window equals good long-context performance; test it on your actual documents.

Reasoning modes cost more compute. GPT-5.4 Thinking, Claude’s extended reasoning, Gemini’s Deep Think mode — these aren’t just different settings. They consume significantly more compute per request than standard generation. Most platforms count these differently against your limits. If you’re hitting caps faster than expected, check whether you’re running reasoning mode on tasks that don’t need it. Simple Q&A, formatting tasks, and basic writing don’t need chain-of-thought. Save the compute for the hard problems.

Agentic tasks accelerate cap consumption. Claude Code sessions, ChatGPT Agent Mode, Perplexity Computer — these multi-step autonomous tasks can eat through a day’s worth of usage quota in a single run. If you’re using agents regularly, the economics of free and even standard paid tiers change quickly. This is why Max-tier products exist. If you’re running a Claude Code session to build a feature across 20 files, you may burn through half your daily Pro allocation in one sitting. That’s not a bug in the pricing — it’s a reflection of how much compute that task actually requires.

The model you get isn’t always the model you pay for. On free tiers especially, platforms route to lighter models during peak demand without always telling you clearly. ChatGPT free degrades from GPT-5.4 to a lighter model at the cap rather than hard-stopping. Gemini free has restricted 2.5 Pro access. Claude free varies by server demand. When response quality suddenly drops in a session, you’ve probably been routed to a smaller model. Paid tiers with “priority access” or “guaranteed availability” language are specifically addressing this.

Weekly vs. daily vs. session caps. Not all limits work the same way. Some platforms reset daily, some by the hour, some by session. ChatGPT typically degrades rather than hard-stops. Claude shows a usage meter and pauses when you’re at the limit. Grok doesn’t publish its numbers at all. Know how each platform manages capacity before you depend on it for time-sensitive work.

Free tiers are real access, not just demos. All five platforms offer genuinely useful free tiers in 2026. The strategic logic: get users into habits with the free tier, convert when they need more. It means you can legitimately do a lot on free if your needs are light. It also means the free tier is designed to show you the product’s best face while keeping you just shy of your actual productivity ceiling.

The Evolution Continues

The AI subscription market is maturing fast, and it’s getting harder to pick wrong at the $20/month tier.

ChatGPT Plus, Claude Pro, and Google AI Pro are all genuinely strong at $20 right now. Each serves some workflows better than the others, but none of them is a bad choice. The differentiation is narrowing on raw capability and widening on ecosystem fit, workflow integration, and the specific capabilities you use most.

The premium tier story is more interesting. OpenAI and Anthropic now share an identical pricing structure at the top — $100 for 5x, $200 for 20x. That alignment is new as of this month, and it reframes the question from “how much?” to “which platform does more with your tokens?” Right now, Claude leads on agentic coding with Opus 4.7. ChatGPT leads on features-per-dollar breadth. Google Ultra is underpriced relative to competitors if you use Veo 3.1 and the full model suite. Grok’s $300 Heavy tier serves a niche and still lacks persistent memory. Perplexity’s $200 Max is the clearest value proposition in the premium bracket if research is your primary use case.

Picking the right AI platform in April 2026 comes down to what you actually do with it every day:

- For maximum value at around $20: Claude Pro has Opus 4.7 with 1M context and Claude Code. ChatGPT Plus has GPT-5.4 Thinking and the broadest feature set. Google AI Pro includes deep Workspace integration. Any of them is a reasonable answer.

- For heavy coding work: Claude — Opus 4.7 leads agentic coding benchmarks, and the toolchain around it is mature.

- For Google Workspace users: Gemini, without much debate. The integration alone is worth the price.

- For research and fact-finding: Perplexity’s citation model is still best-in-class for this specific job.

- For real-time social/news data: Grok, full stop. Nothing else has live X access.

Staying Current

Usage limits change constantly. What’s accurate today may shift next week.

Monitor your usage. Most platforms now show real-time meters. Check them weekly to understand your patterns before you hit walls.

Follow the changelogs. Each platform has release notes. Bookmark them. Changes drop without warning.

Test before committing. Free tiers let you try frontier models risk-free. Use them to validate fit before paying.

Official Sources

All pricing and feature data verified April 21, 2026:

- OpenAI ChatGPT Pricing — official pricing page

- OpenAI — $100 Pro Plan Announcement — TechCrunch, April 9, 2026

- Anthropic Claude Pricing — official pricing page

- Anthropic API Pricing Docs — model token costs

- Anthropic Release Notes — Claude Opus 4.7, April 16, 2026

- Google AI Subscriptions — Pro and Ultra plans

- Gemini API Pricing — developer pricing

- xAI Grok Plans — consumer plans

- xAI Release Notes — Grok 4.3 Beta, April 17, 2026

- Perplexity Enterprise Pricing — team and enterprise plans

For a deep dive into premium tier economics ($100–$300/month) and whether those plans make financial sense for your use case, see our companion article: Is a $100/Month AI Plan Worth It? Breaking Down the Premium Tier Math (link).

For tactical strategies on getting more out of your current plan before upgrading, see: How to Optimize Your AI Usage and Stop Hitting Limits Early (link).

Last Updated: April 21, 2026 | Explore AI Together